

Memory barrier cache coherence

1/22/2024

It would be nice if the compiler could represent `&u32` in memory as if it were just `u32`, i.e. just pass the value rather than a pointer. After all, the pointed-to value is guaranteed to remain unchanged as long as the `&u32` exists.
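The minimal sketch below is not from the original discussion; `round_trip` is a hypothetical helper. It makes two properties concrete. First, while a shared borrow of a `u32` is still in use, the borrow checker rejects writes to it, which is why copying the value could work in principle. Second, a reference still carries an address, so converting a `&u32` to a `*const u32`, back to a `&u32`, and to a `*const u32` again yields the same address.

```rust
use std::ptr;

/// Hypothetical helper: converts a shared reference to a raw pointer,
/// back to a shared reference, and to a raw pointer once more.
/// Returns the first and last raw pointers so they can be compared.
fn round_trip(x: &u32) -> (*const u32, *const u32) {
    let p: *const u32 = x; // &u32 -> *const u32 (safe coercion)
    // SAFETY: `p` was derived from a live `&u32`, so it is non-null,
    // aligned, points to an initialized `u32`, and the pointee cannot be
    // mutated while `x` is borrowed.
    let r: &u32 = unsafe { &*p }; // *const u32 -> &u32
    let q: *const u32 = r; // &u32 -> *const u32 again
    (p, q)
}

fn main() {
    let x: u32 = 42;
    let (p, q) = round_trip(&x);
    // Pointer identity: the address survives the round trip.
    assert!(ptr::eq(p, q));
    assert!(ptr::eq(p, &x));

    // `==` on references compares the pointed-to values, whereas
    // `ptr::eq` compares addresses.
    let y: u32 = 42;
    assert!(&x == &y);
    assert!(!ptr::eq(&x, &y));

    // While a shared borrow is still in use, the value cannot change.
    let mut z: u32 = 1;
    let shared = &z;
    // z += 1; // rejected by the borrow checker: `shared` is used below
    println!("shared borrow sees {}", shared);
    z += 1; // accepted: the borrow ended at its last use
    println!("z is now {}", z);
}
```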

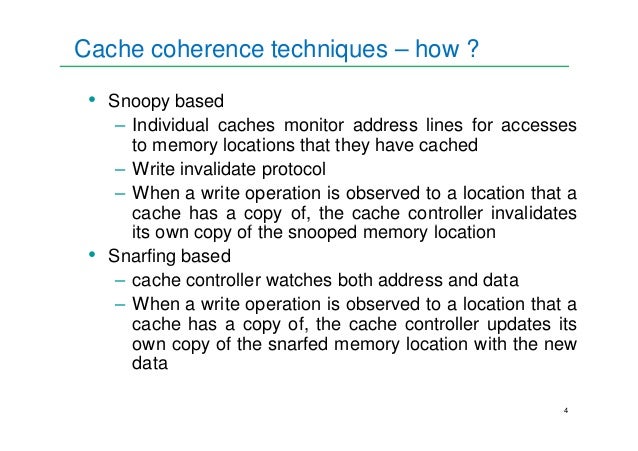

Critically, however, Rust references have pointer identity: you can convert between references and raw pointers, and if you convert a `*const u32` to `&u32` and back to `*const u32`, the pointers are guaranteed to compare equal. So are references just pointers? Yes, they are – well, as much as raw pointers are. In reality, the optimizer can always remove indirections when it can prove that doing so doesn't affect visible semantics, but that applies equally to raw pointers and references.

I agree that it will be a large power penalty, but the alternative may end up being a long tail of software suffering subtle concurrency correctness issues that just aren't there on x86. It may sacrifice some of their advantage, but it would avoid being known as the CPU equivalent of a Ford Pinto. There's still plenty of room to undercut Intel's historical 60% gross margins even if you're shipping largely interchangeable products. The other way it might play out is a race to very high core counts as the main differentiator, providing single-socket performance worth the hassle of not being able to run everything on it in a rock-solid way. That Redis fork that adds threads, maybe not. Or Intel may start to allow developers to relax memory correctness guarantees at process-by-process granularity in its own progress toward high core counts. It's hard to imagine their current methods scaling to 1k cores, but if you had asked me ten years ago, I'd have said they wouldn't make it to 56 cores, either.

Isn't the following statement always true, since casting with `as` will silently truncate the value to a `u32` if `usize` is 64 bits?

EDIT: I know it's a contrived example, but I was just curious whether my understanding is correct. I also found this page in the nomicon about casting:

EDIT2: As I thought, casting a 64-bit `usize` to a `u32` truncates it, and hence the assertion is always true. Further, by using a number that's bigger than `u32::MAX`, this example contains undefined behavior. This is due to the use of `slice::from_raw_parts`, where `self.samples` is left as a `usize` and hence produces a much bigger slice than what was allocated (the allocation only covers the truncated value). I made a small playground which demonstrates the segfault. The assertion should rather be:

Don't get me wrong, I think the blog post is a great explanatory article about memory ordering, and the example is rather contrived. I just wanted to reassure myself that my understanding was correct, and perhaps help someone who doesn't see this issue (it is a very easy trap to fall into).

The memory model is a fascinating topic – it touches on hardware, concurrency, compiler optimizations, and even math. The memory model defines what state a thread may see when it reads a memory location modified by other threads. For example, if one thread updates a regular non-volatile field, it is possible that another thread reading the field will never observe the new value. This program never terminates (in a release build):

For each operation, the table shows two things:

- Is the entire imaginary thread cache refreshed from main memory before the operation?
- Is the entire imaginary thread cache flushed to main memory after the operation?

This blog post reflects my personal understanding of the .NET memory model, and is based purely on publicly available information. I find the explanation based on imaginary thread caches more intuitive than the more commonly used explanation based on operation reordering. The thread cache model is also accurate for most intents and purposes. To be even more accurate, you should assume that the thread caches can form an arbitrarily large hierarchy, so you cannot assume that a read is served from only two possible places – main memory or the thread's cache. I think you would have to construct a fairly clever case for the cache hierarchy to make a difference, though. If anyone is aware of a case where the hierarchical thread cache model makes a prediction different from the reordering-based model, I would love to hear about it.
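The C# program behind "This program never terminates" is not preserved in this copy. The sketch below is a rough Rust analogue of the pattern that *does* terminate. It is an assumption, not the author's code, and `publish_and_observe` is a hypothetical name. In safe Rust you cannot poll a plain `bool` that another thread writes, because that would be a data race, so the flag has to be an atomic. One way to picture this in the thread-cache terms used above is to treat the `Release` store as a flush after the write and the `Acquire` load as a refresh before the read. Treat that mapping as an informal analogy, not a restatement of the post's table.

```rust
use std::hint;
use std::sync::atomic::{AtomicBool, AtomicU64, Ordering};
use std::sync::Arc;
use std::thread;

/// Hypothetical helper: a writer thread stores `payload` and then sets a
/// flag; the calling thread spins on the flag and returns the payload it
/// reads afterwards.
fn publish_and_observe(payload: u64) -> u64 {
    let data = Arc::new(AtomicU64::new(0));
    let ready = Arc::new(AtomicBool::new(false));

    let (d, r) = (Arc::clone(&data), Arc::clone(&ready));
    let writer = thread::spawn(move || {
        d.store(payload, Ordering::Relaxed);
        // Release: everything written before this store is visible to a
        // thread whose Acquire load observes `true`.
        r.store(true, Ordering::Release);
    });

    // Each iteration performs an atomic load, so the writer's store is
    // eventually observed and the loop terminates.
    while !ready.load(Ordering::Acquire) {
        hint::spin_loop();
    }
    // The Acquire load that saw `true` synchronizes with the Release store,
    // so this Relaxed load is guaranteed to see `payload`.
    let seen = data.load(Ordering::Relaxed);
    writer.join().expect("writer thread panicked");
    seen
}

fn main() {
    println!("reader observed {}", publish_and_observe(42));
}
```

Release/Acquire is the weakest standard pairing that guarantees the reader sees the payload once it sees the flag. Using `Relaxed` for the flag as well would still let the loop end, but it would no longer guarantee that `data` holds `payload` at that point.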
